The passion quotient

You’ve never heard of the passion quotient (PQ)? That’s because I just made it up. For example, if 5 authors report that they use Tool X and rate it very important (5 on a scale from 1 to 5), then Tool X scores a perfect PQ of 5.

The formula is this:

PQ = ((# of authors at importance 5 * 5) + (# of authors at importance 4 * 4) + …) / total # of authors

In other words, PQ is the average importance rating across all respondents, so we are looking for the tool whose importance is ranked the highest.
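To make the arithmetic concrete, here is a minimal Python sketch of the formula. The function name `passion_quotient` and its input format (a dictionary mapping each importance level to the number of authors who chose it) are illustrative assumptions, not anything taken from the survey itself.

```python
def passion_quotient(counts: dict[int, int]) -> float:
    """Return the PQ: the average importance rating on a 1-5 scale.

    `counts` (a hypothetical input format) maps each importance level
    to the number of authors who gave the tool that rating.
    """
    if any(level not in range(1, 6) for level in counts):
        raise ValueError("importance levels must be 1-5")
    total_authors = sum(counts.values())
    if total_authors == 0:
        raise ValueError("no responses to average")
    # Weight each level by how many authors chose it, then divide by the total.
    weighted_sum = sum(level * n for level, n in counts.items())
    return weighted_sum / total_authors


# The example from the text: 5 authors all rate Tool X a 5.
print(passion_quotient({5: 5}))  # 5.0

# A hypothetical mixed case: three 5s, one 4, one 1 -> (15 + 4 + 1) / 5.
print(passion_quotient({5: 3, 4: 1, 1: 1}))  # 4.0
```

Because PQ is an average, a tool used by only a handful of enthusiastic authors can score as high as one used by hundreds; the number of respondents is worth keeping in view alongside the score.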

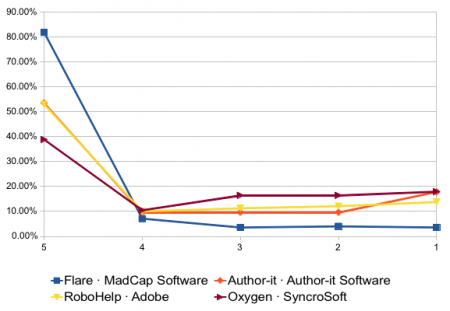

Joe Welinske and WritersUA recently published their annual tools survey. In this survey, the PQ winner, by a wide margin, is MadCap Flare, with a PQ of 4.60. Of 225 respondents, 184 gave Flare the Very Important (5) rating.

I am fascinated by the fact that Flare scores so highly, especially compared to other authoring tools (in descending order):

- Adobe FrameMaker, 3.79

- Adobe RoboHelp, 3.76

- Author-it, 3.71

My initial theory was that because Flare is an all-in-one tool, it might rank higher than FrameMaker and RoboHelp, where votes might be split between the two. But notice that Author-it, which is also an all-in-one solution, has a PQ very similar to those of FrameMaker and RoboHelp, not to Flare’s. I await enlightenment from my readers.

Meanwhile, I noticed that oXygen and XMetaL have the same number of responses (67) and scored almost identically on the PQ (3.36 and 3.37, respectively).

So I broke the results down by the percentage of respondents who reported each level of importance.

| Tool · Vendor | 5 (Very Important) | 4 | 3 | 2 | 1 |
|---|---|---|---|---|---|
| Flare · MadCap Software | 81.78% | 7.11% | 3.56% | 4.00% | 3.56% |
| Author-it · Author-it Software | 53.42% | 9.59% | 9.59% | 9.59% | 17.81% |
| RoboHelp · Adobe | 52.92% | 10.00% | 11.25% | 12.08% | 13.75% |
| Oxygen · SyncroSoft | 38.81% | 10.45% | 16.42% | 16.42% | 17.91% |

For clarity, I omitted FrameMaker, which has numbers very similar to RoboHelp, and XMetaL, whose numbers are similar to Oxygen.
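As a rough cross-check, the PQ can also be recomputed from the percentage breakdown, because each percentage is simply a count divided by the number of respondents. This Python sketch assumes the percentages are copied exactly as shown in the table; since they are rounded to two decimals and don’t always sum to exactly 100, it divides by their actual sum rather than by 100. The names `BREAKDOWN` and `pq_from_shares` are hypothetical.

```python
# Percentage of respondents at each importance level (5 down to 1),
# copied from the table above.
BREAKDOWN = {
    "Flare": [81.78, 7.11, 3.56, 4.00, 3.56],
    "Author-it": [53.42, 9.59, 9.59, 9.59, 17.81],
    "RoboHelp": [52.92, 10.00, 11.25, 12.08, 13.75],
    "Oxygen": [38.81, 10.45, 16.42, 16.42, 17.91],
}

LEVELS = [5, 4, 3, 2, 1]


def pq_from_shares(shares: list[float]) -> float:
    """Weighted average importance, computed from percentage shares."""
    # Rounded percentages may sum to 100.01 or 99.99, so normalise by their sum.
    total_share = sum(shares)
    return sum(level * share for level, share in zip(LEVELS, shares)) / total_share


for tool, shares in BREAKDOWN.items():
    print(f"{tool}: {pq_from_shares(shares):.2f}")
# Flare: 4.60
# Author-it: 3.71
# RoboHelp: 3.76
# Oxygen: 3.36
```

The recomputed values match the PQ scores quoted earlier in the post, so the percentages and the scores are at least internally consistent.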

What conclusions, if any, can we draw from this information?

Kai

Full disclosure: Of the tools compared, I’ve only ever used Flare. It’s also the only software I’d call myself a gushing fan-boy of, so go figure… I’ve never been offered or received any favours from MadCap, except for a free T-shirt.

Personally, I can identify three reasons why Flare scores so highly.

(1) The “Bloomberg” effect: Similar to Bloomberg’s financial terminals, Flare is both powerful and quirky enough to make its mastery an achievement to be proud of. Many users mention Flare’s steep learning curve, but the “view from the top”, if you will, pays off.

(2) The “Clinton” effect: Flare is the only software (corporate or retail) that makes me feel like it knows and respects the way I work. It feels my (past) pains and has good remedies for several of them.

(3) The “Avis” effect: MadCap tells a story that combines the rhetoric of the underdog and of the second chance. They try harder. They have milked their RoboHelp roots for all they’re worth. And I just find the MadCappers trustworthy as a company: They’re very open and up front about what Flare can and cannot do, and they were as responsive to our questions and issues when we were a prospect as they are now that we’re a customer.

Cheers, Kai.

Mark Baker

Pure off-the-cuff speculation here, but I wonder if the differences may be based on perceived fitness to task. FrameMaker, for instance, may be purchased by default even where it is not the best tool for the task, whereas Flare may only be selected when it really is the best tool for the task.

XML tools, regardless of their actual fitness for the task at hand, may be seen as less fit for the task by writers who expect all authoring tools to work on DTP principles. They may also genuinely be less fitting for the task if the documentation process is still organized on DTP principles despite the adoption of XML.

In other words, the scores may reflect not how well each tool works for the task it was designed for, but how well it was matched to the task it was actually used for.

Nancy Thompson

I’m guessing that other people may have responded to the survey question as I did. If I use that tool (and only that tool) and I can’t do my work without it, I rate it a 5 in importance. If I use that tool along with other tools I am asked to rate in the list, I may give one tool a 4 and another tool a 3, depending on how important each is to my work overall. If I’m not alone in this interpretation, the results may reflect a poorly worded survey question rather than the kind of data WritersUA intended to collect.

Sarah O'Keefe

@Nancy, I think your interpretation makes sense. But it’s not necessarily a flaw: the implication is that people find Flare uniquely important. What I don’t understand is why similar tools don’t earn similar scores.

@Kai, I think you may be on to something in that Flare users do seem to be a loyal bunch.

@Mark, this would imply that Flare is doing a better job of drawing in the “correct” users — people who really need what the tool offers — in comparison to other tools.

All of these theories seem reasonable to me. Do I have to pick one?